Ollama: Powerful Language Models on Your Own Machine

Large language models (LLMs) represent the forefront of artificial intelligence in natural language processing. These models can generate remarkably human-like text, translate between languages, write many kinds of creative content, and much more. Until recently, the computational power they require made them inaccessible to most individuals. Ollama changes that by providing tools to run powerful LLMs on your own hardware.

What is Ollama?

Ollama is an open-source project that streamlines the setup and use of popular open LLMs such as Llama 2, Mixtral, and Gemma. It offers a simple command-line interface, customization options, and tools to manage your models. With Ollama, you can tap into this technology without extensive technical expertise.

Benefits of Running LLMs Locally

- Privacy: When you host an LLM on your own machine, your data and interactions remain private, unlike cloud-based solutions where data usage is less transparent.

- Cost-efficiency: Over time, deploying LLMs on your own computer may be more cost-effective than paying for cloud-based services.

- Customization: Running LLMs locally grants you greater control over fine-tuning models for specific tasks or experimenting with different parameters.

- Offline functionality: Local LLMs are accessible even without an internet connection.

How to Use Ollama

- Installation: Ollama provides a convenient install script. Visit the Ollama website (https://ollama.com/) for instructions.

- Model Selection: Ollama supports a growing list of popular LLMs. Browse the available models and choose one that best suits your requirements.

- Run the Model: Ollama offers a simple command-line interface to load and run your chosen model.

- Interaction: Send prompts or text inputs to the LLM and receive generated output.

Setting Up Ollama with Docker Compose

Docker Compose introduces flexibility and organization when working with Ollama. Here’s a basic example of a docker-compose.yml file:

    services:
      oll-server:
        image: ollama/ollama:latest
        container_name: oll-server
        volumes:
          - ./data:/root/.ollama
        restart: unless-stopped
        deploy:
          resources:
            reservations:
              devices:
                - driver: nvidia
                  count: 1
                  capabilities: [gpu]
        networks:
          - net

    networks:
      net:
        external: true

Services

- oll-server: This section defines a service named “oll-server” whose container is based on the ollama/ollama:latest Docker image (the most recently published version of the Ollama software).

Image

- ollama/ollama:latest: This specifies the Docker image to use for the container. The ollama/ollama:latest image is the official Ollama image and contains the software and configuration needed to run the Ollama LLM service.

Container Name

- container_name: oll-server: Gives your container a specific, easily identifiable name.

Volumes

- ./data:/root/.ollama: This creates a bind mount. Here’s how it works:
  - ./data: A directory on your host machine called “data”. This directory should be created in the same location as your docker-compose.yml file.
  - /root/.ollama: A directory inside the container.
  - This mapping lets Ollama store its data (models and configuration) in the “data” folder on your host machine, so the data is preserved even if the container is destroyed.

Restart Policy

- restart: unless-stopped: This instructs Docker to restart the container if it fails or exits, unless you manually stop it. This helps ensure your Ollama service remains available.

Deploy (Resource Allocation)

- resources:
  - reservations:
    - devices:
      - driver: nvidia: Selects the NVIDIA driver, so the reservation applies to NVIDIA GPUs.
      - count: 1: Requests a single GPU.
      - capabilities: [gpu]: Requests the GPU capability so the container can access and use the GPU. The host needs the NVIDIA Container Toolkit installed for this to work.

Networks

- networks:
  - net: Connects the container to a network called “net”.
- net:
  - external: true: Indicates that “net” is an existing network created outside the scope of this docker-compose.yml file. Your container will join this pre-existing network.

In Summary

This docker-compose.yml file configures a Docker container to run the Ollama service. Crucially, it does the following:

- Utilizes an NVIDIA GPU: The resource allocation ensures the Ollama service can use a compatible GPU, necessary for the performance of many large language models.

- Preserves data: The volume mapping helps persist model data and configurations.

- Connects to an external network: The container connects to a network, presumably for communication with other services or the outside world.

Running with Docker Compose

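- Ensure you have Docker and Docker Compose installed.
- Save the configuration above as docker-compose.yml.
- Because “net” is declared as an external network, make sure it exists before starting the service. If it does not, create it with: docker network create net
- In your terminal, navigate to the directory containing the file and run: docker-compose up -d (with Compose V2, the equivalent is docker compose up -d).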

Models

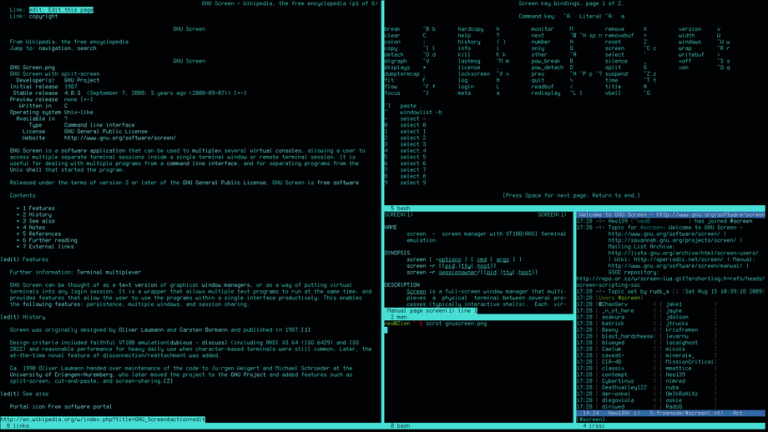

- Exec into the Container: The docker exec oll-server ... command lets you execute a command inside your running Ollama container (named oll-server).
- Ollama Run: ollama run is the core Ollama command that loads and runs a specified model.
- Model Identifier: gemma:7b specifies the model you want to run. The identifier follows a name:tag format:
  - Model Name: ‘gemma’
  - Size or Variant: ‘7b’ (the 7-billion-parameter version)
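The name:tag structure described above can be illustrated with a small parsing sketch. The helper name `parse_model_identifier` is hypothetical and not part of Ollama. It assumes a plain identifier without a registry host or namespace, and it assumes that a missing tag means `latest`, which is how Ollama treats untagged names.

```python
def parse_model_identifier(identifier: str) -> tuple[str, str]:
    """Split 'name:tag' into (name, tag).

    Hypothetical helper for illustration only. Assumes no registry
    host or namespace prefix, and defaults the tag to 'latest'.
    """
    name, _, tag = identifier.partition(":")
    if not name:
        raise ValueError(f"missing model name in {identifier!r}")
    return (name, tag or "latest")


print(parse_model_identifier("gemma:7b"))
print(parse_model_identifier("llama2"))
```

The first call yields the name `gemma` with tag `7b`; the second falls back to the `latest` tag.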

Full Command Explained

The command docker exec oll-server ollama run gemma:7b tells Docker to:

- Enter the running container named ‘oll-server’.

- Inside the container, execute the Ollama command to run the model named ‘gemma’ using its 7b tag.
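If you want to drive this command from a script, the following is a minimal sketch. The function names `docker_exec_ollama` and `run_prompt` are hypothetical. It assumes the container is named oll-server as in the compose file, and it passes the prompt as an extra argument to `ollama run` so the command answers once and exits. Without a prompt, `ollama run` opens an interactive session, which needs `docker exec -it` in a terminal instead.

```python
import shlex
import subprocess
from typing import List, Optional


def docker_exec_ollama(container: str, model: str,
                       prompt: Optional[str] = None) -> List[str]:
    """Build the argv for running a model inside a container.

    Hypothetical helper: it only assembles the command, it does not run it.
    """
    argv = ["docker", "exec", container, "ollama", "run", model]
    if prompt is not None:
        argv.append(prompt)  # one-shot mode: answer this prompt, then exit
    return argv


def run_prompt(container: str, model: str, prompt: str) -> str:
    """Run the command and return the model's output (requires Docker)."""
    result = subprocess.run(
        docker_exec_ollama(container, model, prompt),
        capture_output=True, text=True, check=True,
    )
    return result.stdout


# Show the command that would be executed, without running it.
print(shlex.join(docker_exec_ollama("oll-server", "gemma:7b", "Why is the sky blue?")))
```

Calling `run_prompt("oll-server", "gemma:7b", "Why is the sky blue?")` would execute the command; it raises `subprocess.CalledProcessError` if the container is not running or the model cannot be loaded.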

Important Notes

- Model Availability: This command assumes the ‘gemma:7b’ model is either already downloaded and stored within your Ollama container or that Ollama can fetch it from a model repository.

- Potential Errors: If you encounter errors, ensure:
  - The model name and variant are correct.
  - Your container has sufficient resources (RAM, GPU memory) to run the model.
  - You are in the correct directory (where your docker-compose.yml file is, if you’re using one).
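One way to check the model-availability point above is to ask the Ollama server which models it already has. The sketch below uses the server's `/api/tags` endpoint, which lists local models. It assumes the API is reachable at http://localhost:11434 (Ollama's default port); the compose file above does not publish that port, so you would need to publish it or run the script from a container on the same network. The helper names and the sample response are illustrative only.

```python
import json
import urllib.request


def fetch_tags(base_url: str = "http://localhost:11434") -> dict:
    """Fetch the list of local models (needs a reachable Ollama server)."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=10) as resp:
        return json.load(resp)


def is_model_installed(model: str, tags_response: dict) -> bool:
    """Return True if `model` appears in an /api/tags response.

    An identifier without a tag is treated as '<name>:latest'.
    """
    wanted = model if ":" in model else f"{model}:latest"
    installed = {entry["name"] for entry in tags_response.get("models", [])}
    return wanted in installed


# Hand-written sample in the shape of an /api/tags response (not real output).
sample = {"models": [{"name": "gemma:7b"}, {"name": "llama2:latest"}]}
print(is_model_installed("gemma:7b", sample))
print(is_model_installed("mixtral", sample))
```

Against a live server you would call `is_model_installed("gemma:7b", fetch_tags())` instead of using the sample dictionary.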

How to get models:

Find more models in the Ollama library at https://ollama.com/library and install them on your local machine by running the corresponding commands, e.g.:

- ollama run mixtral

- ollama run llama2

- ollama run llama2:70b

- ollama run llama2:70b-chat

- ollama run command-r

Advanced Usage and Considerations

- Hardware Requirements: LLMs can be computationally demanding. Ensure your system has sufficient RAM, a powerful GPU (with CUDA support for NVIDIA), and ample storage for large model files.

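- Fine-tuning: Ollama does not train models on your datasets itself, but it lets you customize a model through a Modelfile (for example, a system prompt and sampling parameters) and import models or adapters that were fine-tuned elsewhere, giving them specialized domain knowledge.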

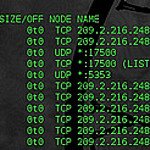
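To make the hardware requirements mentioned above more concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need. The function name is hypothetical, and the 20% overhead factor for context and runtime buffers is an assumption rather than a measured value; actual usage depends on the quantization, context length, and runtime.

```python
def estimate_weight_memory_gb(params_billions: float,
                              bits_per_weight: int,
                              overhead: float = 1.2) -> float:
    """Rough memory estimate in GB: parameters x bytes per weight x overhead.

    The overhead factor is an assumed allowance, not a measured figure.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead / 1e9


for size in (7, 70):
    # 4-bit quantization is assumed here for illustration.
    print(f"{size}B at 4-bit: about {estimate_weight_memory_gb(size, 4):.1f} GB")
```

Under these assumptions, a 7-billion-parameter model at 4 bits per weight needs roughly 4 GB, while a 70-billion-parameter model needs roughly ten times that, which is why larger variants call for high-memory GPUs or plenty of system RAM.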

- API Development: For integration into applications, Ollama already exposes a REST API (on port 11434 by default), so you can call it directly or build your own service on top of it.
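For application integration, one option is to call the HTTP API that the Ollama server itself exposes. The sketch below sends a prompt to the `/api/generate` endpoint using only the Python standard library. It assumes the server is reachable at http://localhost:11434, which with the compose file above would require publishing that port or calling from the same Docker network. The helper names are hypothetical.

```python
import json
import urllib.request


def build_generate_request(model: str, prompt: str,
                           base_url: str = "http://localhost:11434"
                           ) -> urllib.request.Request:
    """Build a POST request for /api/generate with streaming disabled."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str,
             base_url: str = "http://localhost:11434") -> str:
    """Send the prompt and return the generated text (needs a live server)."""
    request = build_generate_request(model, prompt, base_url)
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.load(resp)["response"]


# Inspect the request without sending it.
req = build_generate_request("gemma:7b", "Why is the sky blue?")
print(req.get_method(), req.full_url)
```

With a running server, `generate("gemma:7b", "Why is the sky blue?")` returns the model's answer as a string. Setting `"stream": False` asks for a single JSON object instead of a stream of partial responses, which keeps the client code short.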

Conclusion

Ollama democratizes the use of advanced language models. With its simplified setup and management, it unlocks the potential of LLMs for developers, researchers, and enthusiasts. Whether you’re exploring AI, building creative tools, or streamlining workflows, running LLMs on your hardware offers unparalleled privacy, customization, and control.